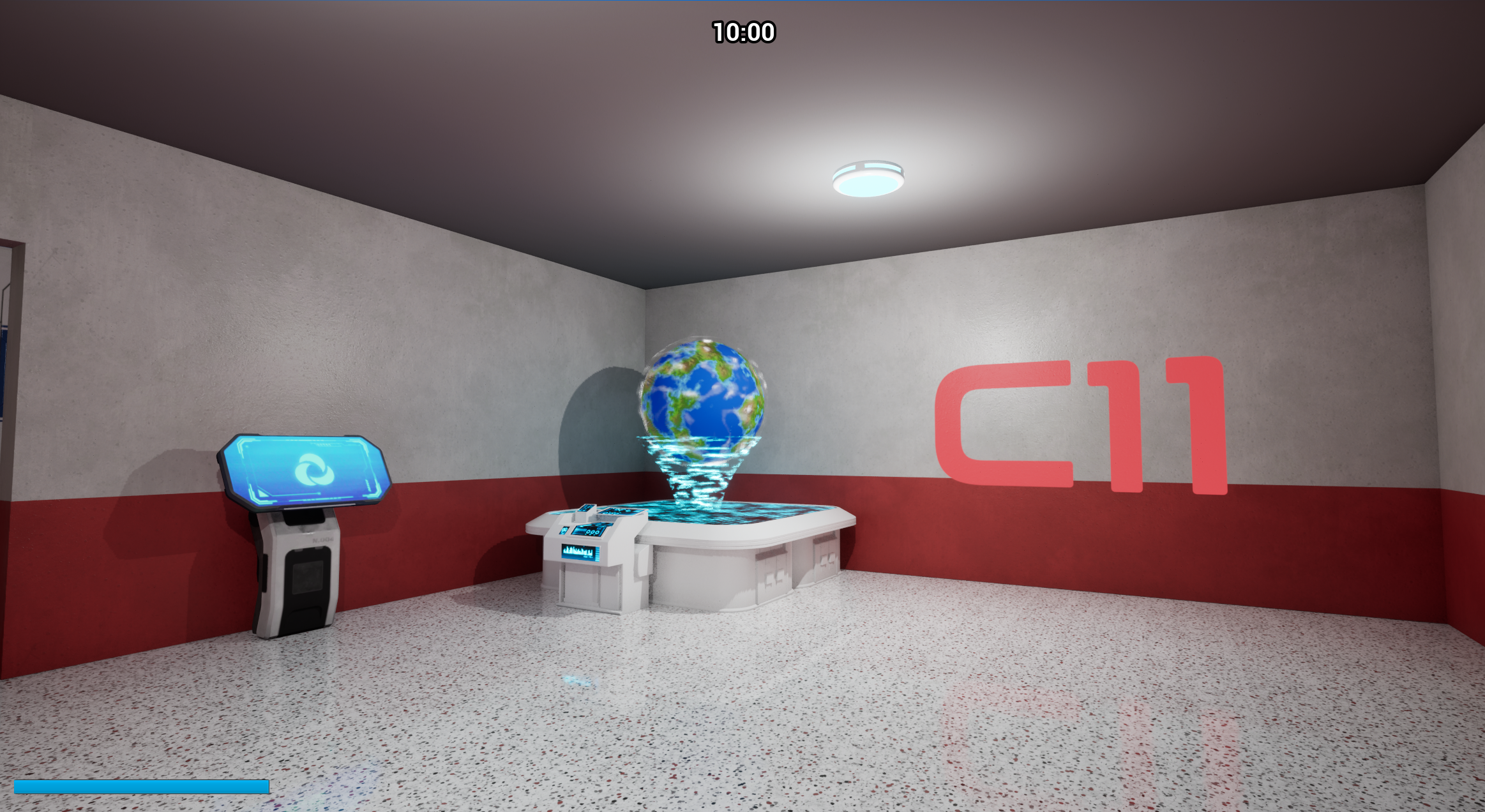

GIKO: Get In - Keep Out

Overview

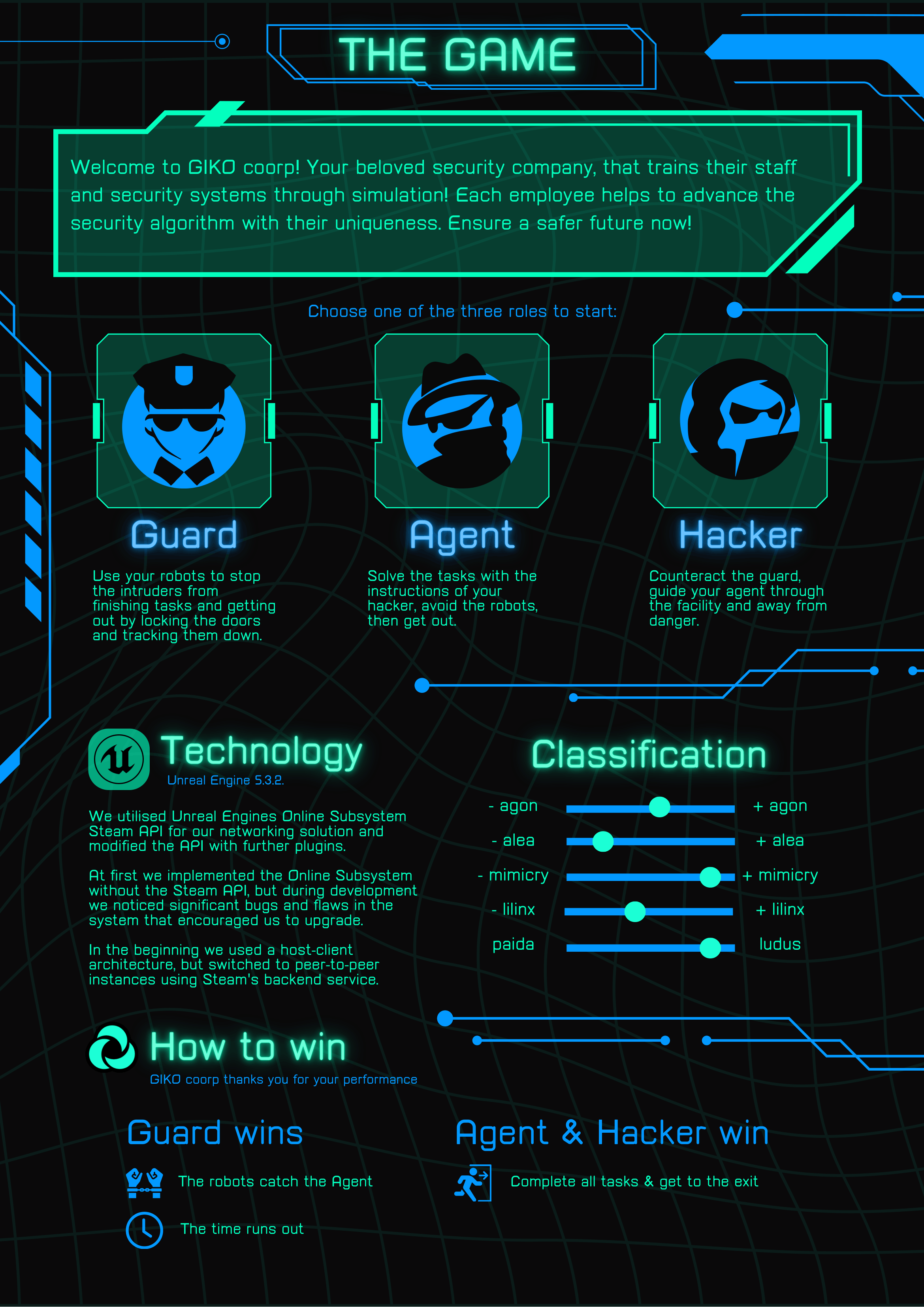

GIKO is an asymmetrical three-player game in which players jump into the roles of an agent, a hacker and a guard working for the GIKO Company. The agent and hacker must work together to navigate the level, while the guard and the droids they control try to stop them.


While GIKO initially started as a uni project, we plan to pursue the idea further in the future, expanding it into a fully fleshed-out game that will eventually be released.

Since this involves major reworks of the prototype we have created so far, we have decided not to make the demo freely available for the time being.

Technical Aspects

The primary goal of our project was to create a functional multiplayer game in Unreal, including networking across multiple computers, lobby functionality and a host-client setup using the Steam API.

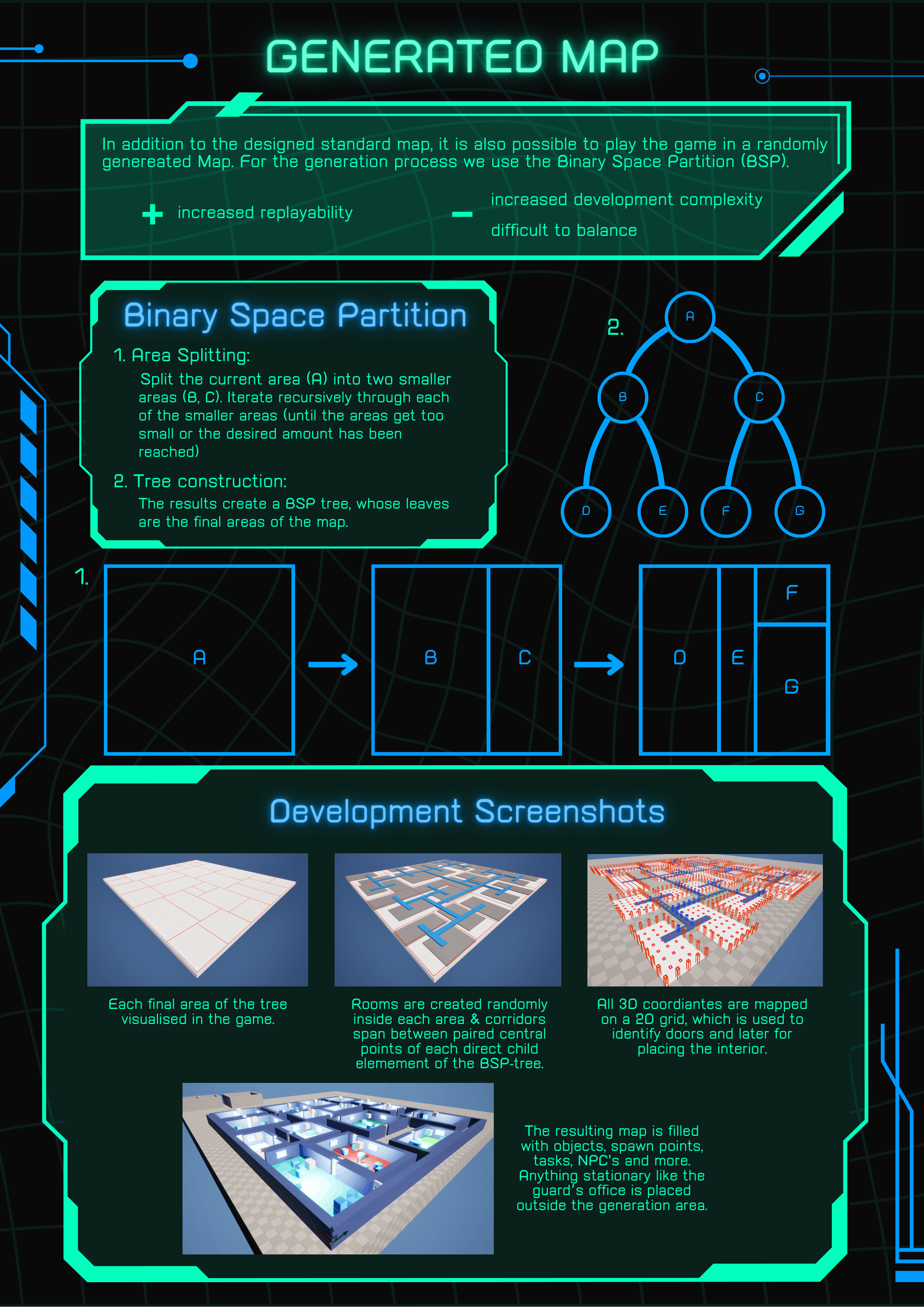

For our secondary goal, we implemented a level generation system that uses grid-based procedural generation of rooms and walls. We further increased the randomness of our game with generated tasks for the agent and hacker to complete.
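The generator's code is not part of this write-up. As a rough illustration of grid-based generation, the plain C++ sketch below uses a randomized depth-first search (a "recursive backtracker"). This is a swapped-in, well-known technique and not necessarily what GIKO uses: every grid cell starts walled off, and walls are removed until every cell is reachable. A seeded shuffle then picks tasks from a pool. `GridLevel`, `generateLevel`, `passageCount` and `pickTasks` are hypothetical names, and none of this is Unreal API code.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <random>
#include <stack>
#include <string>
#include <utility>
#include <vector>

// Hypothetical grid layout: for each cell, whether the wall to its east and
// to its south has been removed. The outer boundary walls always stay.
struct GridLevel {
    int width = 0;
    int height = 0;
    std::vector<bool> openEast;   // openEast[y * width + x]: passage to (x + 1, y)
    std::vector<bool> openSouth;  // openSouth[y * width + x]: passage to (x, y + 1)
};

// Randomized depth-first search: walk to a random unvisited neighbour,
// knocking down the wall in between, and backtrack at dead ends.
GridLevel generateLevel(int width, int height, unsigned seed) {
    assert(width > 0 && height > 0);
    const int cells = width * height;
    GridLevel level{width, height, std::vector<bool>(cells, false),
                    std::vector<bool>(cells, false)};
    // Same seed -> same layout, given the same standard library
    // (std::uniform_int_distribution is implementation-defined).
    std::mt19937 rng(seed);
    std::vector<bool> visited(cells, false);
    std::stack<std::pair<int, int>> path;
    path.push({0, 0});
    visited[0] = true;

    const int dx[4] = {1, -1, 0, 0};  // east, west, south, north
    const int dy[4] = {0, 0, 1, -1};
    while (!path.empty()) {
        auto [x, y] = path.top();
        std::vector<int> dirs;  // directions leading to unvisited neighbours
        for (int d = 0; d < 4; ++d) {
            int nx = x + dx[d], ny = y + dy[d];
            if (nx >= 0 && nx < width && ny >= 0 && ny < height && !visited[ny * width + nx])
                dirs.push_back(d);
        }
        if (dirs.empty()) { path.pop(); continue; }  // dead end: backtrack
        std::uniform_int_distribution<std::size_t> pick(0, dirs.size() - 1);
        int d = dirs[pick(rng)];
        int nx = x + dx[d], ny = y + dy[d];
        // Remove the wall between (x, y) and (nx, ny).
        if (d == 0) level.openEast[y * width + x] = true;
        if (d == 1) level.openEast[ny * width + nx] = true;
        if (d == 2) level.openSouth[y * width + x] = true;
        if (d == 3) level.openSouth[ny * width + nx] = true;
        visited[ny * width + nx] = true;
        path.push({nx, ny});
    }
    return level;
}

// Number of removed walls, i.e. open passages between neighbouring cells.
int passageCount(const GridLevel& level) {
    int count = 0;
    for (bool open : level.openEast) count += open;
    for (bool open : level.openSouth) count += open;
    return count;
}

// Picks up to `count` distinct tasks from a (hypothetical) task pool.
std::vector<std::string> pickTasks(std::vector<std::string> pool, std::size_t count, unsigned seed) {
    std::mt19937 rng(seed);
    std::shuffle(pool.begin(), pool.end(), rng);
    pool.resize(std::min(count, pool.size()));
    return pool;
}
```

Because each newly reached cell opens exactly one wall, a W × H grid always ends up with W·H − 1 passages: a spanning tree with no unreachable cells. A pure spanning tree offers only one route between any two cells, so a playable level would likely knock out a few extra walls afterwards.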

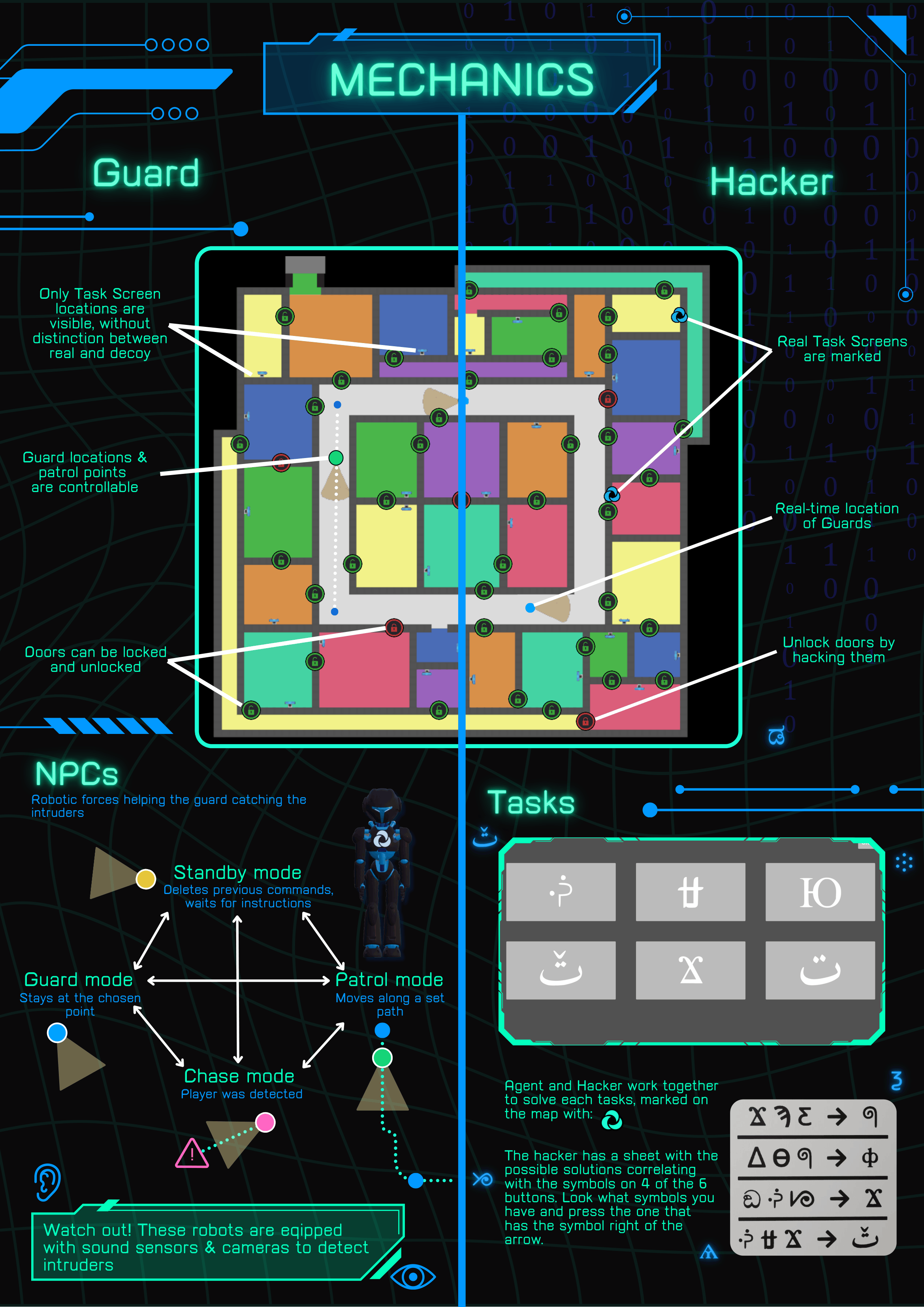

My responsibilities in this project were primarily the implementation of the NPCs and all aspects related to them, which included:

- Setting up the dynamic NavMesh so that it reacts to changes in the level layout caused by locked/unlocked doors

- Player detection through sight and sound sensors

- The Agent emitting different sound levels while running, walking or sneaking

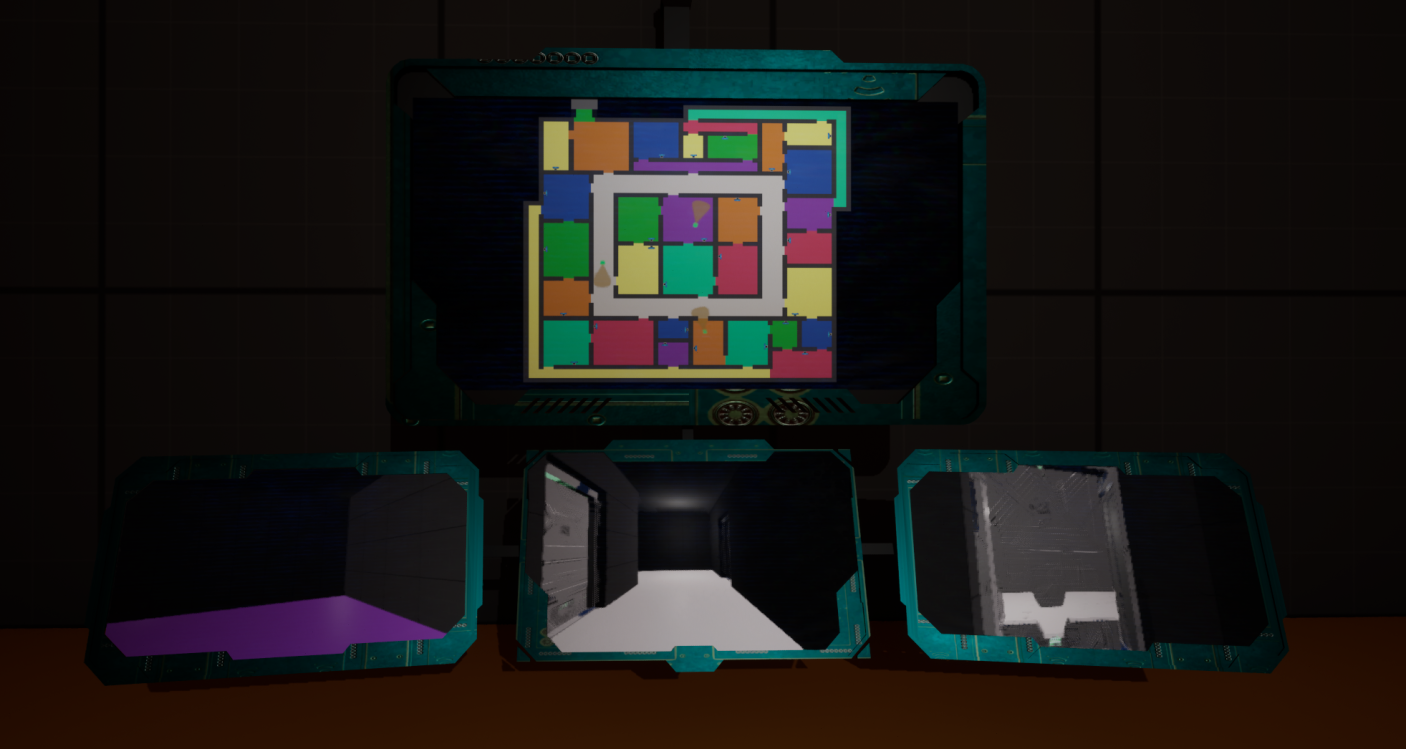

- Streaming each NPC's view to RenderTarget textures to display them on the screens in the Guard's office

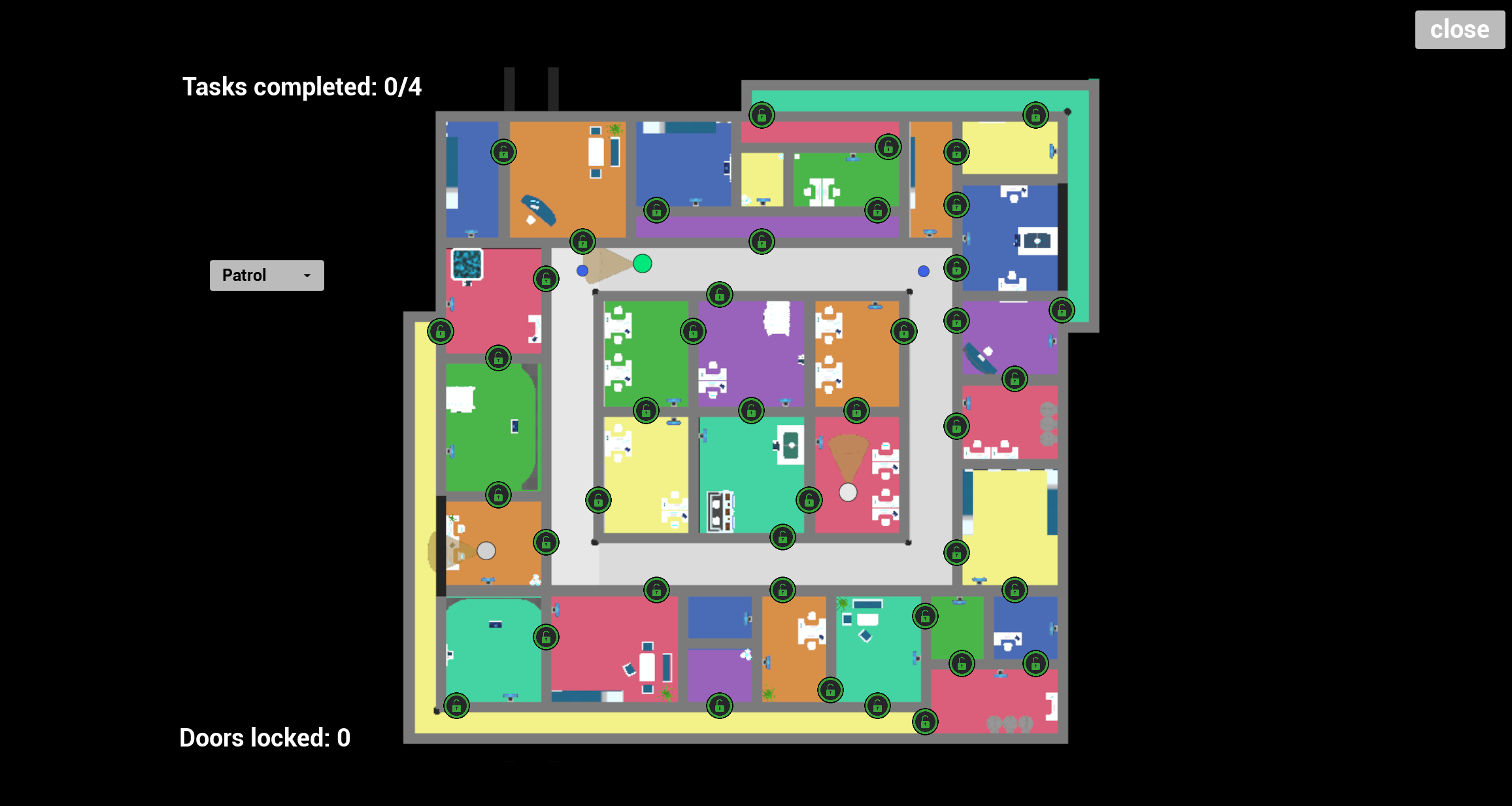

- Interactive Map UI

  - Displaying the real-time location of all NPCs

  - Manually changing NPC states

    - GUARD: moves to and remains at a position defined by the player

    - PATROL: continuously moves along a player-defined route

    - STANDBY: deletes all previous commands and remains at the current position

    - CHASE: activated automatically whenever the Agent is detected; ignores all previous commands and pursues the Agent, then returns to the previous state once the Agent is lost again

- Implementing external 3D models and retargeting animations to new rigs

- Reworking the droid models' materials to allow matching recolouring and displaying our logo
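The sound-based detection and the NPC states listed above can be sketched as a small controller. This is an engine-independent C++ sketch under stated assumptions, not the project's Unreal implementation: the names (`MoveMode`, `canHear`, `DroidController`, ...) are hypothetical, the loudness values are made up, and hearing is modelled simply as loudness scaling the droid's hearing range. CHASE overrides whatever the guard commanded while the Agent is seen or heard, and the droid falls back to the last command once the Agent is lost.

```cpp
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Agent movement modes (hypothetical) and the loudness each one emits.
enum class MoveMode { Sneak, Walk, Run };

float noiseLoudness(MoveMode mode) {
    switch (mode) {  // assumed values, arbitrary units
        case MoveMode::Sneak: return 0.2f;
        case MoveMode::Walk:  return 0.6f;
        case MoveMode::Run:   return 1.0f;
    }
    return 0.0f;  // unreachable for valid enum values
}

// Assumed hearing model: loudness scales the droid's hearing range, so a
// running Agent is audible from further away than a sneaking one.
bool canHear(MoveMode mode, float distance, float hearingRange) {
    assert(distance >= 0.0f && hearingRange >= 0.0f);
    return distance <= noiseLoudness(mode) * hearingRange;
}

enum class DroidState { Guard, Patrol, Standby, Chase };

struct Point { float x, y; };

// Keeps the guard player's last command separate from the automatic chase,
// so the droid can fall back to that command once the Agent is lost.
class DroidController {
public:
    DroidState state() const { return chasing_ ? DroidState::Chase : commanded_; }

    // GUARD: move to one player-defined position and stay there.
    void commandGuard(Point target) { setCommand(DroidState::Guard, {target}); }
    // PATROL: loop over a player-defined route.
    void commandPatrol(std::vector<Point> route) { setCommand(DroidState::Patrol, std::move(route)); }
    // STANDBY: drop all previous commands and stay put.
    void commandStandby() { setCommand(DroidState::Standby, {}); }

    // Called on every perception update; CHASE is active exactly while the
    // Agent is seen or heard, overriding whatever was commanded.
    void updatePerception(bool agentSeen, bool agentHeard) { chasing_ = agentSeen || agentHeard; }

    // Writes the next movement target to `out`. Returns false when there is
    // no route to follow (STANDBY) or during CHASE, where the Agent is the target.
    bool nextWaypoint(Point& out) {
        if (chasing_ || route_.empty()) return false;
        out = route_[waypoint_];
        if (commanded_ == DroidState::Patrol) waypoint_ = (waypoint_ + 1) % route_.size();
        return true;
    }

private:
    void setCommand(DroidState newState, std::vector<Point> route) {
        commanded_ = newState;
        route_ = std::move(route);
        waypoint_ = 0;
    }

    DroidState commanded_ = DroidState::Standby;  // assumed initial state
    std::vector<Point> route_;
    std::size_t waypoint_ = 0;
    bool chasing_ = false;
};
```

Storing the command separately from the chase flag means a command issued mid-chase is not lost; it simply takes effect once the droid gives up the pursuit. The project description does not say whether GIKO handles that case the same way.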

Gallery

and the POV of the individual droids on the smaller screens below

GUARD state animation

Droid patrolling along route

Droid chasing Agent

STANDBY state animation

Promotional posters created for our booth at our uni's project fair: